\[ \newcommand{\bv}[1]{\boldsymbol{\mathbf{#1}}} \]

Click here to download the Rmd file: week11-predictions-in-MLM.Rmd

Load Packages and Import Data

# To install a package, run the following ONCE (and only once on your computer)

# install.packages("psych")

library(here) # makes reading data more consistent

library(tidyverse) # for data manipulation and plotting

library(haven) # for importing SPSS/SAS/Stata data

library(lme4) # for multilevel analysis

library(glmmTMB)

library(cAIC4) # for conditional AIC

library(glmmLasso) # for MLM lasso

library(MuMIn) # for model averaging

library(modelsummary) # for making tables

library(interactions) # for interaction plots

theme_set(theme_bw()) # Theme; just my personal preference

The data is the first wave of the Cognition, Health, and Aging Project.

# Download the data from

# https://www.pilesofvariance.com/Chapter8/SPSS/SPSS_Chapter8.zip, and put it

# into the "data_files" folder

zip_data <- here("data_files", "SPSS_Chapter8.zip")

# download.file("https://www.pilesofvariance.com/Chapter8/SPSS/SPSS_Chapter8.zip",

# zip_data)

stress_data <- read_sav(

unz(zip_data,

"SPSS_Chapter8/SPSS_Chapter8.sav"))

stress_data <- stress_data %>%

# Center mood (originally 1-5) at 1 for interpretation (so it becomes 0-4)

# Also women to factor

mutate(mood1 = mood - 1,

women = factor(women, levels = c(0, 1),

labels = c("men", "women")))

The data is already in long format. Let’s first do a subsample of 30 participants:

# First, separate the time-varying variables into within-person and

# between-person levels

stress_sub <- stress_sub %>%

group_by(PersonID) %>%

# The `across()` function can be used to operate the same procedure on

# multiple variables

mutate(across(c(symptoms, mood1, stressor),

# The `.` means the variable to be operated on

list("pm" = ~ mean(., na.rm = TRUE),

"pmc" = ~ . - mean(., na.rm = TRUE)))) %>%

ungroup()

Let’s use the model from last week

Level 1: \[\text{symptoms}_{ti} = \beta_{0i} + \beta_{1i} \text{mood1_pmc}_{ti} + e_{ti}\] Level 2: \[ \begin{aligned} \beta_{0i} & = \gamma_{00} + \gamma_{01} \text{mood1_pm}_{i} + \gamma_{02} \text{women}_i + \gamma_{03} \text{mood1_pm}_{i} \times \text{women}_i + u_{0i} \\ \beta_{1i} & = \gamma_{10} + \gamma_{11} \text{women}_i + u_{1i} \end{aligned} \]

- \(\gamma_{03}\) = between-person interaction

- \(\gamma_{11}\) = cross-level interaction

m1 <- glmmTMB(

symptoms ~ (mood1_pm + mood1_pmc) * women + (mood1_pmc | PersonID),

data = stress_sub, REML = TRUE,

# The default optimizer did not converge; try optim

control = glmmTMBControl(

optimizer = optim,

optArgs = list(method = "BFGS")

)

)

Predictions

# Cluster-specific

(obs1 <- stress_sub[1, c("PersonID", "mood1_pm", "mood1_pmc", "women",

"symptoms")])

># # A tibble: 1 × 5

># PersonID mood1_pm mood1_pmc women symptoms

># <dbl> <dbl> <dbl> <fct> <dbl>

># 1 103 0 0 women 0(pred1 <- predict(m1, newdata = obs1))

># [1] 0.3192# Unconditional/marginal prediction

predict(m1, newdata = obs1, re.form = NA)

># [1] 0.922Prediction Error

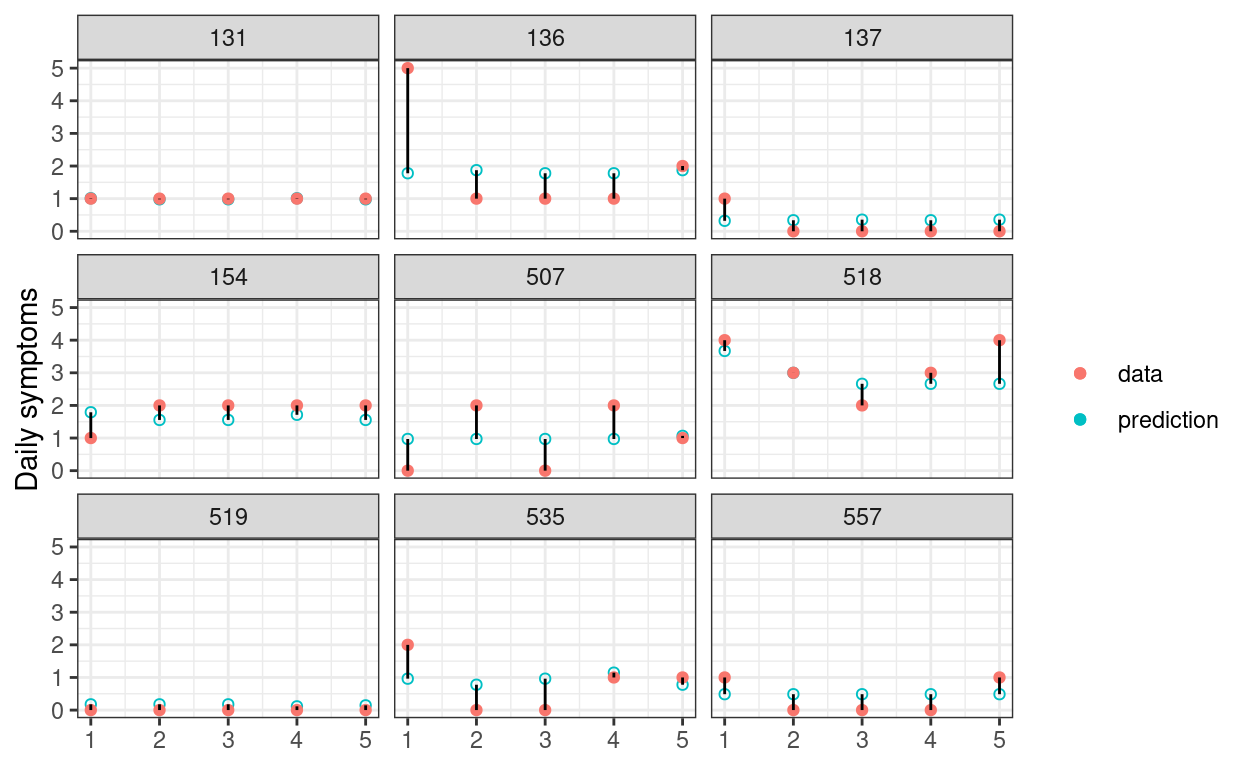

In the graph below, the 68% predictive intervals are shown in skyblue, whereas the actual data are shown in red. A good statistical model should have good preditive accuracy so that the skyblue dots and the red dots are close; a valid statistical model should have most of the skyblue intervals covering the observed data.

broom.mixed::augment(m1) %>%

# Random sample of 9 Persons

filter(PersonID %in% sample(unique(PersonID), 9)) %>%

group_by(PersonID) %>%

# Add a variable to indicate observation number

mutate(obsid = row_number()) %>%

ungroup() %>%

ggplot(aes(x = obsid, y = .fitted)) +

geom_point(aes(col = "prediction"), shape = 21) +

geom_point(aes(y = symptoms, col = "data")) +

geom_segment(aes(xend = obsid, yend = symptoms)) +

facet_wrap(~PersonID) +

labs(x = NULL, y = "Daily symptoms", col = NULL)

Average In-Sample Prediction Error

Let’s consider the prediction error for everyone in the data

# Obtain predicted values for everyone

pred_all <- predict(m1, re.form = NA)

# Now, compute the prediction error

prederr_all <- m1$frame$symptoms - pred_all

# Statisticians love to square the prediction error. The mean of the squared

# prediction error is called the mean squared error (MSE)

(mse_m1 <- mean(prederr_all^2))

># [1] 1.037# The square root of MSE, the root mean squared error (RMSE), can be considered

# the average prediction error (marginal)

(rmse_m1 <- sqrt(mse_m1))

># [1] 1.018Let’s now consider a model with more predictors:

# 35 main/interaction effects

m2 <- glmmTMB(

symptoms ~ (mood1_pm + mood1_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood1_pmc + stressor | PersonID),

data = stress_sub,

# The default optimizer did not converge; try optim

control = glmmTMBControl(

optimizer = optim,

optArgs = list(method = "BFGS")

)

)

The model does not converge. However, let’s ignore the warning for a second, and check the prediction error:

pred_all <- predict(m2, re.form = NA)

prederr_all <- m2$frame$symptoms - pred_all

mse_m2 <- mean(prederr_all^2)

tibble(Model = c("M1", "M2"),

`In-sample MSE` = c(mse_m1, mse_m2))

># # A tibble: 2 × 2

># Model `In-sample MSE`

># <chr> <dbl>

># 1 M1 1.04

># 2 M2 0.761You can see that the MSE drops with the more complex model. Does it mean that this more complex model should be preferred?

Out-Of-Sample Prediction Error

The problem of using in-sample prediction error to determine which model should be preferred is that the complex model will capture a lot of the noise in the data, making it not generalizable to other sample. In-sample prediction is not very meaningful, because if we already have the data, our interest is usually not to predict them. Instead, in research, we want models that will generalize to other samples. Therefore, learning all the noise in the sample is not a good idea, and will lead to overfitting—having estimates that are not generalizable to other samples.

To see this, let’s try to use the models we built on the 30

participants to predict symptoms for the remaining 75

participants:

# Get the remaining data

stress_test <- stress_data %>%

# Select participants not included in the previous models

filter(!PersonID %in% random_persons) %>%

# Person-mean centering

group_by(PersonID) %>%

# The `across()` function can be used to operate the same procedure on

# multiple variables

mutate(across(

c(symptoms, mood1, stressor),

# The `.` means the variable to be operated on

list(

"pm" = ~ mean(., na.rm = TRUE),

"pmc" = ~ . - mean(., na.rm = TRUE)

)

)) %>%

ungroup()

# Prediction error from m1

pred_all <- predict(m1,

newdata = stress_test, re.form = NA,

allow.new.levels = TRUE

)

prederr_all <- stress_test$symptoms - pred_all

mse_m1 <- mean(prederr_all^2, na.rm = TRUE)

# Prediction error from m2

pred_all <- predict(m2,

newdata = stress_test, re.form = NA,

allow.new.levels = TRUE

)

prederr_all <- stress_test$symptoms - pred_all

mse_m2 <- mean(prederr_all^2, na.rm = TRUE)

# Print out-of-sample prediction error

tibble(Model = c("M1", "M2"),

`Out-of-sample MSE` = c(mse_m1, mse_m2))

># # A tibble: 2 × 2

># Model `Out-of-sample MSE`

># <chr> <dbl>

># 1 M1 1.84

># 2 M2 2.47As you can see from above, the prediction on data not used for

building the model is worse. And m2 makes much worse

out-of-sample prediction than m1, because when the sample

size is small relative to the size of the model, a complex model is

especially prone to overfitting, as it has many parameters that can be

used to capitalize on the noise of the data.

As suggested before, we should care about out-of-sample prediction

error than in-sample prediction error, so in this case m1

should be preferred. In practice, however, we don’t usually have the

luxury of having another sample for us to get out-of-sample prediction

error. So what should we do?

Cross-Validation

A simple solution is cross-validation, which is extremely popular in machine learning. The idea is to split the data into two parts, just like what we did above. Then fit the model in one part, and get the prediction error on the other part. The process is repeated \(K\) times for a \(K\)-fold cross-validation until the prediction error is obtained on every observation.

It should be pointed out that \(K\)-fold cross-validation, gives a biased

estimate of prediction error especially when \(K\) is small, but it is extremely intensive

when \(K\) is large, as it requires

refitting the model \(K\) times. Below

is a demo for doing a 5-fold cross-validation on the training data (with

30 participants) with lme4::lmer() (you can do the same

with glmmTMB), which is mainly for you to understand its

logic.

# Split the index of 30 participants into 5 parts

num_folds <- 5

random_sets <- split(

random_persons,

rep_len(1:num_folds, length(random_persons))

)

# Initialize the sum of squared prediction errors for each model

sum_prederr_m1 <- sum_prederr_m2 <- 0

# Loop over each set

for (setk in random_sets) {

# Fit model 1 on the subset

fit_m1 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * women + (mood1_pmc | PersonID),

data = stress_sub,

# Select specific observations

subset = !PersonID %in% setk

)

# Remaining data

stress_sub_test <- stress_sub %>% filter(PersonID %in% setk)

# Obtain prediction error

pred_m1_test <- predict(fit_m1, newdata = stress_sub_test, re.form = NA)

prederr_m1 <- stress_sub_test$symptoms - pred_m1_test

sum_prederr_m1 <- sum_prederr_m1 + sum(prederr_m1^2, na.rm = TRUE)

# Fit model 2 on the subset

fit_m2 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood1_pmc + stressor | PersonID),

data = stress_sub,

# Select specific observations

subset = !PersonID %in% setk

)

# Remaining data

stress_sub_test <- stress_sub %>% filter(PersonID %in% setk)

# Obtain prediction error

pred_m2_test <- predict(fit_m2, newdata = stress_sub_test, re.form = NA)

prederr_m2 <- stress_sub_test$symptoms - pred_m2_test

sum_prederr_m2 <- sum_prederr_m2 + sum(prederr_m2^2, na.rm = TRUE)

}

# MSE (dividing the sum by 150 observations)

tibble(Model = c("M1", "M2"),

`5-fold CV MSE` = c(sum_prederr_m1 / 150, sum_prederr_m2 / 150))

># # A tibble: 2 × 2

># Model `5-fold CV MSE`

># <chr> <dbl>

># 1 M1 1.18

># 2 M2 2.95# The estimated out-of-sample MSE is more than double for m2 than for m1

Leave-one-out (LOO) cross validation

A special case of cross-validation is to use \(N - 1\) observations to build the model to

predict the remaining one observation, and repeat the process \(N\) times. This is the LOO, which is

essentially an \(N\)-fold cross

validation. While this may first seem very unrealistic given that the

model needs to be fitted \(N\) times,

there are computational shortcuts or approximations that can make this

much more efficient, and one of them that uses the Pareto smoothed

importance sampling (PSIS) is available for models fitted with

brms. So we can do

# Initialize the sum of squared prediction errors for each model

sum_prederr_m1 <- sum_prederr_m2 <- 0

# Loop over each person

for (personk in random_persons) {

# Fit model 1 on the data without personk

fit_m1 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * women + (mood1_pmc | PersonID),

data = stress_sub,

# Select specific observations

subset = PersonID != personk

)

# Data for personk

stress_sub_test <- stress_sub %>% filter(PersonID == personk)

# Obtain prediction error

pred_m1_test <- predict(fit_m1, newdata = stress_sub_test, re.form = NA)

prederr_m1 <- stress_sub_test$symptoms - pred_m1_test

sum_prederr_m1 <- sum_prederr_m1 + sum(prederr_m1^2, na.rm = TRUE)

# Fit model 2 on the subset

fit_m2 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood1_pmc + stressor | PersonID),

data = stress_sub,

# Select specific observations

subset = PersonID != personk

)

# Data for personk

stress_sub_test <- stress_sub %>% filter(PersonID == personk)

# Obtain prediction error

pred_m2_test <- predict(fit_m2, newdata = stress_sub_test, re.form = NA)

prederr_m2 <- stress_sub_test$symptoms - pred_m2_test

sum_prederr_m2 <- sum_prederr_m2 + sum(prederr_m2^2, na.rm = TRUE)

}

# MSE (dividing the sum by 150 observations)

tibble(Model = c("M1", "M2"),

`LOO CV MSE` = c(sum_prederr_m1 / 150, sum_prederr_m2 / 150))

># # A tibble: 2 × 2

># Model `LOO CV MSE`

># <chr> <dbl>

># 1 M1 1.23

># 2 M2 3.37# The results are similar

which again suggested m1 is expected to have less

out-of-sample prediction error. In practice, 5 or 10-fold CV is usually

used to save time.

A Remark on Cross-Validation for Hierarchical Data

With hierarchical data, cross-validation can be done at different levels. What we did before was to split the sample of clusters, i.e., CV at the top level (i.e., level 2, or person). This kind of CV is most relevant when one is interested in prediction at the cluster level. Another way to do CV is to split the data within clusters. For example, here each person has five time points max, so we can use four time points to build model to predict the remaining time point.

# Add time to the data

stress_sub <- stress_sub %>%

group_by(PersonID) %>%

mutate(time = row_number())

# Initialize the sum of squared prediction errors for each model

sum_prederr_m1 <- sum_prederr_m2 <- 0

# Loop over each person

for (t in 1:5) {

# Fit model 1 on the data without personk

fit_m1 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * women + (mood1_pmc | PersonID),

data = stress_sub,

# Select specific observations

subset = time != t

)

# Data for personk

stress_sub_test <- stress_sub %>% filter(time == t)

# Obtain prediction error

pred_m1_test <- predict(fit_m1, newdata = stress_sub_test, re.form = NA)

prederr_m1 <- stress_sub_test$symptoms - pred_m1_test

sum_prederr_m1 <- sum_prederr_m1 + sum(prederr_m1^2, na.rm = TRUE)

# Fit model 2 on the subset

fit_m2 <- lmer(

symptoms ~ (mood1_pm + mood1_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood1_pmc + stressor | PersonID),

data = stress_sub,

# Select specific observations

subset = time != t

)

# Data for personk

stress_sub_test <- stress_sub %>% filter(time == t)

# Obtain prediction error

pred_m2_test <- predict(fit_m2, newdata = stress_sub_test, re.form = NA)

prederr_m2 <- stress_sub_test$symptoms - pred_m2_test

sum_prederr_m2 <- sum_prederr_m2 + sum(prederr_m2^2, na.rm = TRUE)

}

# MSE (dividing the sum by 150 observations)

tibble(

Model = c("M1", "M2"),

`5-fold Within-Cluster CV MSE` =

c(sum_prederr_m1 / 150, sum_prederr_m2 / 150)

)

># # A tibble: 2 × 2

># Model `5-fold Within-Cluster CV MSE`

># <chr> <dbl>

># 1 M1 1.09

># 2 M2 1.40Here, M1 is still better, but the difference is smaller. For more discussion on the difference, please check out this blog post, or the paper “Conditional Akaike information for mixed-effects models”.

Information Criteria

A closely-related way to estimate the out-of-sample prediction is to

use information criteria, which is based on information theory. Simply

speaking, these are measures of the expected out-of-sample prediction

error under certain assumptions (e.g., normality). The most famous one

is the Akaike information criterion (AIC), named after statistician

Hirotugu Akaike, who derived that under certain assumptions, the

expected prediction error is the deviance plus two times the number of

parameters in the model. We can obtain AICs using the generic

AIC() function for cluster-level predictions, and the

cAIC4::cAIC() function for individual-level

predictions.

# Refit with lme4 as glmmTMB did not converge

fit_m1 <- lmer(symptoms ~ (mood1_pm + mood1_pmc) * women +

(mood1_pmc | PersonID),

data = stress_sub)

fit_m2 <- lmer(symptoms ~ (mood1_pm + mood1_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood1_pmc + stressor | PersonID),

data = stress_sub)

# Cluster-level prediction

AIC(fit_m1, fit_m2)

># df AIC

># fit_m1 10 399.4

># fit_m2 43 400.0# Individual-level prediction

rbind(

m1 = cAIC(fit_m1)[c("df", "caic")],

m2 = cAIC(fit_m2)[c("df", "caic")]

)

># df caic

># m1 29.07 367.3

># m2 51.56 388.7Because AIC approximates the out-of-sample prediction error (for

continuous, normal outcomes), a model with a lower AIC should be

preferred. Here it shows m1 is better for individual-level

prediction.

To avoid overfitting, we should compare models based on the out-of-sample prediction errors, which can be approxiamted by preferring models with lower AICs.

How about the BIC?

As a side note, AIC is commonly presented alongside BIC, the Bayesian information criterion (BIC). However, BIC was developed with very different motivations, and technically it is not an information criterion (and it is debatable whether it is Bayesian). However, it can be used in a similar way, where models showing lower BICs represent better fit. It tends to prefer models that are more parsimonious than the AIC.

Model Comparisons

Let’s compare several models:

mood1andstressor, no random slopesmood1_pm,mood1_pmc,stressor_pm,stressor, no random slopesmood1_pm,mood1_pmc,stressor_pm,stressor, random slopes- Model 3 + interaction terms:

mood1_pm:stressor_pm,mood1_pmc:stressor

m_1 <- lmer(

symptoms ~ mood1 + stressor + (1 | PersonID),

data = stress_sub, REML = FALSE

)

m_2 <- lmer(

symptoms ~ mood1_pm + mood1_pmc + stressor_pm + stressor +

(1 | PersonID),

data = stress_sub, REML = FALSE

)

m_3 <- lmer(

symptoms ~ mood1_pm + mood1_pmc + stressor_pm + stressor +

(mood1_pmc + stressor | PersonID),

data = stress_sub, REML = FALSE

)

m_4 <- lmer(

symptoms ~ mood1_pm * stressor + mood1_pmc * stressor +

(mood1_pmc + stressor | PersonID),

data = stress_sub, REML = FALSE

)

# Marginal AIC

AIC(m_1, m_2, m_3, m_4) # m_2 is the best

># df AIC

># m_1 5 404.2

># m_2 7 403.6

># m_3 12 410.2

># m_4 13 414.3# Conditional AIC

rbind(

m_1 = cAIC(m_1)[c("df", "caic")],

m_2 = cAIC(m_2)[c("df", "caic")],

m_3 = cAIC(m_3)[c("df", "caic")],

m_4 = cAIC(m_4)[c("df", "caic")]

) # m_1, m_2, m_3 similar

># df caic

># m_1 28.94 369.3

># m_2 28.76 369.3

># m_3 28.76 369.3

># m_4 30.8 373.5Overall, it seems (1) and (2) are best for predictions, with similar AICs.

Bonus: Model Averaging

# Use full data with centering

stress_data <- stress_data %>%

group_by(PersonID) %>%

# The `across()` function can be used to operate the same procedure on

# multiple variables

mutate(across(c(symptoms, mood1, stressor),

# The `.` means the variable to be operated on

list("pm" = ~ mean(., na.rm = TRUE),

"pmc" = ~ . - mean(., na.rm = TRUE)))) %>%

ungroup()

# Use lme4 as glmmTMB had convergence issue and did not compute AIC

# Require data without missing

stress_lm <- drop_na(stress_data)

m_full <- lmer(

symptoms ~ mood1_pm + mood1_pmc + stressor_pm + stressor +

women + baseage + weekend +

(mood1_pmc + stressor | PersonID),

na.action = "na.fail",

data = stress_lm,

REML = FALSE

)

# Fit all models with mood1_pm + mood1_pmc + stressor_pm + stressor, and

# compare marginal AIC

(dd_maic <- dredge(m_full,

fixed = ~ mood1_pm + mood1_pmc + stressor_pm + stressor,

rank = "AIC" # using marginal AIC

))

># Global model call: lmer(formula = symptoms ~ mood1_pm + mood1_pmc + stressor_pm +

># stressor + women + baseage + weekend + (mood1_pmc + stressor |

># PersonID), data = stress_lm, REML = FALSE, na.action = "na.fail")

># ---

># Model selection table

># (Int) bsg md1_pm md1_pmc str str_pm wkn wmn df

># 5 0.7357 1.842 0.1216 0.06794 0.8862 + 13

># 1 0.4809 1.835 0.1202 0.06323 0.9142 12

># 7 0.7581 1.848 0.1285 0.06200 0.8918 -0.04645 + 14

># 6 0.9238 -0.002391 1.857 0.1223 0.06764 0.8825 + 14

># 3 0.5044 1.842 0.1272 0.05713 0.9202 -0.04790 13

># 2 0.8611 -0.004780 1.866 0.1212 0.06271 0.9066 13

># 8 0.9212 -0.002079 1.861 0.1290 0.06179 0.8886 -0.04611 + 15

># 4 0.8560 -0.004426 1.870 0.1282 0.05675 0.9131 -0.04709 14

># logLik AIC delta weight

># 5 -701.8 1430 0.00 0.296

># 1 -703.2 1430 0.75 0.204

># 7 -701.6 1431 1.60 0.133

># 6 -701.8 1432 1.97 0.110

># 3 -703.0 1432 2.32 0.093

># 2 -703.1 1432 2.64 0.079

># 8 -701.6 1433 3.58 0.049

># 4 -702.9 1434 4.23 0.036

># Models ranked by AIC(x)

># Random terms (all models):

># mood1_pmc + stressor | PersonID>#

># Call:

># model.avg(object = dd_maic)

>#

># Component model call:

># lmer(formula = symptoms ~ <8 unique rhs>, data = stress_lm,

># REML = FALSE, na.action = na.fail)

>#

># Component models:

># df logLik AIC delta weight

># 23457 13 -701.8 1430 0.00 0.30

># 2345 12 -703.2 1430 0.75 0.20

># 234567 14 -701.6 1431 1.60 0.13

># 123457 14 -701.8 1432 1.97 0.11

># 23456 13 -703.0 1432 2.32 0.09

># 12345 13 -703.1 1432 2.64 0.08

># 1234567 15 -701.6 1433 3.58 0.05

># 123456 14 -702.9 1434 4.23 0.04

>#

># Term codes:

># baseage mood1_pm mood1_pmc stressor stressor_pm

># 1 2 3 4 5

># weekend women

># 6 7

>#

># Model-averaged coefficients:

># (full average)

># Estimate Std. Error Adjusted SE z value Pr(>|z|)

># (Intercept) 0.709383 0.657131 0.658636 1.08 0.281

># womenwomen -0.196355 0.222608 0.222855 0.88 0.378

># mood1_pm 1.846834 0.371470 0.372371 4.96 <2e-16 ***

># mood1_pmc 0.123392 0.140043 0.140383 0.88 0.379

># stressor 0.064039 0.100212 0.100455 0.64 0.524

># stressor_pm 0.898096 0.302339 0.303072 2.96 0.003 **

># weekend -0.014576 0.045969 0.046056 0.32 0.752

># baseage -0.000902 0.007913 0.007931 0.11 0.909

>#

># (conditional average)

># Estimate Std. Error Adjusted SE z value Pr(>|z|)

># (Intercept) 0.70938 0.65713 0.65864 1.08 0.281

># womenwomen -0.33353 0.19601 0.19649 1.70 0.090 .

># mood1_pm 1.84683 0.37147 0.37237 4.96 <2e-16 ***

># mood1_pmc 0.12339 0.14004 0.14038 0.88 0.379

># stressor 0.06404 0.10021 0.10045 0.64 0.524

># stressor_pm 0.89810 0.30234 0.30307 2.96 0.003 **

># weekend -0.04690 0.07269 0.07286 0.64 0.520

># baseage -0.00329 0.01484 0.01488 0.22 0.825

># ---

># Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1# Variable importance

importance(dd_maic)

># function (x)

># UseMethod("sw")

># <bytecode: 0x147edb48>

># <environment: namespace:MuMIn># Fit all models with mood1_pm + mood1_pmc + stressor_pm + stressor, and

# compare conditional AIC

(dd_caic <- dredge(m_full,

fixed = ~ mood1_pm + mood1_pmc + stressor_pm + stressor,

rank = function(x) cAIC4::cAIC(x)$caic # using conditional AIC

))

># Global model call: lmer(formula = symptoms ~ mood1_pm + mood1_pmc + stressor_pm +

># stressor + women + baseage + weekend + (mood1_pmc + stressor |

># PersonID), data = stress_lm, REML = FALSE, na.action = "na.fail")

># ---

># Model selection table

># (Int) bsg md1_pm md1_pmc str str_pm wkn wmn df

># 3 0.5044 1.842 0.1272 0.05713 0.9202 -0.04790 13

># 4 0.8560 -0.004426 1.870 0.1282 0.05675 0.9131 -0.04709 14

># 7 0.7581 1.848 0.1285 0.06200 0.8918 -0.04645 + 14

># 1 0.4809 1.835 0.1202 0.06323 0.9142 12

># 2 0.8611 -0.004780 1.866 0.1212 0.06271 0.9066 13

># 5 0.7357 1.842 0.1216 0.06794 0.8862 + 13

># 6 0.9238 -0.002391 1.857 0.1223 0.06764 0.8825 + 14

># 8 0.9212 -0.002079 1.861 0.1290 0.06179 0.8886 -0.04611 + 15

># logLik IC delta weight

># 3 -703.0 1313 0.00 0.297

># 4 -702.9 1313 0.27 0.259

># 7 -701.6 1313 0.37 0.246

># 1 -703.2 1316 3.09 0.063

># 2 -703.1 1316 3.59 0.049

># 5 -701.8 1317 4.08 0.039

># 6 -701.8 1318 4.52 0.031

># 8 -701.6 1319 5.87 0.016

># Models ranked by IC(x)

># Random terms (all models):

># mood1_pmc + stressor | PersonID>#

># Call:

># model.avg(object = dd_caic)

>#

># Component model call:

># lmer(formula = symptoms ~ <8 unique rhs>, data = stress_lm,

># REML = FALSE, na.action = na.fail)

>#

># Component models:

># df logLik IC delta weight

># 23456 13 -703.0 1313 0.00 0.30

># 123456 14 -702.9 1313 0.27 0.26

># 234567 14 -701.6 1313 0.37 0.25

># 2345 12 -703.2 1316 3.09 0.06

># 12345 13 -703.1 1317 3.59 0.05

># 23457 13 -701.8 1317 4.08 0.04

># 123457 14 -701.8 1317 4.52 0.03

># 1234567 15 -701.6 1319 5.87 0.02

>#

># Term codes:

># baseage mood1_pm mood1_pmc stressor stressor_pm

># 1 2 3 4 5

># weekend women

># 6 7

>#

># Model-averaged coefficients:

># (full average)

># Estimate Std. Error Adjusted SE z value Pr(>|z|)

># (Intercept) 0.70249 0.73699 0.73870 0.95 0.3416

># weekend -0.03858 0.06822 0.06837 0.56 0.5726

># mood1_pm 1.85209 0.37370 0.37461 4.94 <2e-16 ***

># mood1_pmc 0.12670 0.14014 0.14048 0.90 0.3671

># stressor 0.05971 0.10059 0.10084 0.59 0.5538

># stressor_pm 0.90733 0.30325 0.30399 2.98 0.0028 **

># baseage -0.00149 0.00906 0.00908 0.16 0.8699

># womenwomen -0.11064 0.19338 0.19354 0.57 0.5675

>#

># (conditional average)

># Estimate Std. Error Adjusted SE z value Pr(>|z|)

># (Intercept) 0.70249 0.73699 0.73870 0.95 0.3416

># weekend -0.04717 0.07270 0.07287 0.65 0.5174

># mood1_pm 1.85209 0.37370 0.37461 4.94 <2e-16 ***

># mood1_pmc 0.12670 0.14014 0.14048 0.90 0.3671

># stressor 0.05971 0.10059 0.10084 0.59 0.5538

># stressor_pm 0.90733 0.30325 0.30399 2.98 0.0028 **

># baseage -0.00419 0.01484 0.01487 0.28 0.7780

># womenwomen -0.33348 0.19596 0.19643 1.70 0.0896 .

># ---

># Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1# Variable importance

importance(dd_caic)

># function (x)

># UseMethod("sw")

># <bytecode: 0x147edb48>

># <environment: namespace:MuMIn>Bonus: Lasso (Least Absolute Shrinkage and Selection Operator)

The idea of Lasso, or more generally regularization, is that complex models tend to overfit in small samples and when the number of predictors is large. On the other hand, simple models, which means that when all predictors are assumed either not predictive or weakly predictive of the outcome, tend to underfit. Therefore, one can find the right fit by having the estimates to be somewhere between 0 and the maximum likelihood estimates. This is achieved in Lasso by adding a penalty in the minimization algorithm, usually called lambda, with larger lambda corresponding to a larger penalty.

Here is an example. Note that generally one standardizes the data so that the variables have standard deviations of 1s. It is, however, not discussed much in the literature whether this applies to cluster means and cluster mean centered variables for multilevel analysis. In the example below, I only standardize the raw variables.

stress_data <- read_sav(

unz(zip_data,

"SPSS_Chapter8/SPSS_Chapter8.sav")

)

stress_std <- stress_data %>%

mutate(across(

c(women, baseage, weekend, symptoms, mood, stressor),

~ (. - mean(., na.rm = TRUE)) / sd(., na.rm = TRUE)

))

# Subsample

stress_std <- stress_std %>%

drop_na() %>%

# filter(PersonID %in% random_persons) %>%

group_by(PersonID) %>%

# The `across()` function can be used to operate the same procedure on

# multiple variables

mutate(across(

c(symptoms, mood, stressor),

# The `.` means the variable to be operated on

list(

"pm" = ~ mean(., na.rm = TRUE),

"pmc" = ~ . - mean(., na.rm = TRUE)

)

)) %>%

ungroup() %>%

mutate(PersonID = as_factor(PersonID))

# Maximum likelihood estimates

m2_full <- lmer(

symptoms ~ (mood_pm + mood_pmc) * (stressor_pm + stressor) *

(women + baseage + weekend) +

(mood_pmc + stressor | PersonID),

data = stress_std,

REML = FALSE

)

# Lasso with lambda = 19

# First obtain the predictor matrix

pred_mat <- model.matrix(

symptoms ~ (mood_pm + mood_pmc + stressor_pm + stressor) +

(women + baseage + weekend), data = stress_std)

m2_penalized <- glmmLasso(

# First argument with fixed effect; it does not support the `*` notation

symptoms ~ mood_pm + mood_pmc + stressor_pm + stressor +

women + baseage + weekend +

(mood_pm + mood_pmc):(stressor_pm + stressor) +

(mood_pm + mood_pmc):(women + baseage + weekend) +

(stressor_pm + stressor):(women + baseage + weekend) +

(mood_pm + mood_pmc):(stressor_pm + stressor):(women + baseage + weekend),

# Random effects

rnd = list(PersonID = ~ mood_pmc + stressor),

data = stress_std,

lambda = 20,

final.re = TRUE

)

# Find lambda with lowest AIC

# lambda_grid <- seq(0, to = 100, by = 1)

# aic_grid <- rep(NA, length = length(lambda_grid))

# for (i in seq_along(lambda_grid)) {

# m2_penalized <- glmmLasso(

# # First argument with fixed effect; it does not support the `*` notation

# symptoms ~ mood_pm + mood_pmc + stressor_pm + stressor +

# women + baseage + weekend +

# (mood_pm + mood_pmc):(stressor_pm + stressor) +

# (mood_pm + mood_pmc):(women + baseage + weekend) +

# (stressor_pm + stressor):(women + baseage + weekend) +

# (mood_pm + mood_pmc):(stressor_pm + stressor):(women + baseage + weekend),

# # Random effects

# rnd = list(PersonID = ~ mood_pmc + stressor),

# data = stress_std,

# lambda = lambda_grid[i]

# )

# aic_grid[i] <- m2_penalized$aic

# }

# Compare the fixed effects

round(cbind(fixef(m2_full), m2_penalized$coefficients), 2)

># [,1] [,2]

># (Intercept) 0.07 0.04

># mood_pm 0.63 0.60

># mood_pmc 0.02 0.00

># stressor_pm 0.31 0.33

># stressor 0.04 0.04

># women -0.23 -0.15

># baseage -0.01 0.00

># weekend -0.01 0.00

># mood_pm:stressor_pm -0.30 -0.12

># mood_pm:stressor -0.01 0.00

># mood_pmc:stressor_pm 0.02 0.00

># mood_pmc:stressor 0.01 0.04

># mood_pm:women -0.31 -0.15

># mood_pm:baseage -0.04 -0.06

># mood_pm:weekend -0.02 0.00

># mood_pmc:women 0.04 0.00

># mood_pmc:baseage 0.07 0.00

># mood_pmc:weekend 0.08 0.08

># stressor_pm:women -0.09 -0.16

># stressor_pm:baseage 0.03 0.02

># stressor_pm:weekend 0.10 0.00

># stressor:women -0.06 -0.04

># stressor:baseage 0.01 0.00

># stressor:weekend -0.06 0.00

># mood_pm:stressor_pm:women 0.53 0.00

># mood_pm:stressor_pm:baseage 0.05 0.06

># mood_pm:stressor_pm:weekend 0.10 0.00

># mood_pm:stressor:women -0.06 0.00

># mood_pm:stressor:baseage -0.11 -0.12

># mood_pm:stressor:weekend -0.13 -0.05

># mood_pmc:stressor_pm:women 0.03 0.00

># mood_pmc:stressor_pm:baseage -0.08 0.00

># mood_pmc:stressor_pm:weekend 0.21 0.23

># mood_pmc:stressor:women -0.01 0.00

># mood_pmc:stressor:baseage 0.02 0.00

># mood_pmc:stressor:weekend -0.11 -0.10As can be seen, many of the coefficients have shrunken with Lasso.

The variables that seem most useful in predicting symptoms

are mood_pm, stressor_pm, women,

mood_pm:stressor_pm, mood_pm:women,

stressor_pm:women, mood_pm:stressor:baseage,

mood_pmc:stressor_pm:weekend. The lambda value can be

chosen using AIC or using cross-validation.